Google has released a new family of open-weight AI models designed for local use across consumer devices, from workstations to smartphones. The Gemma 4 series, built on the Gemini 3 architecture, includes four variants optimized for different hardware and workloads. The most accessible options are Effective 2B and Effective 4B, which are compact enough to run offline on mobile phones, Raspberry Pi boards, and Nvidia Jetson Nano systems.

The 26B Mixture of Experts and 31B Dense models sit at the higher end and require substantial compute capacity. According to Google, running them in unquantized bfloat16 format calls for an 80GB Nvidia H100 GPU.
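A quick back-of-the-envelope calculation shows why an 80GB card is the stated target. The minimal sketch below assumes 2 bytes per parameter for bfloat16 and takes the nominal 26B and 31B counts from the model names. On that basis the weights alone come to roughly 52 GB and 62 GB. It counts weights only. The KV cache, activations and framework overhead are not included, and they consume part of the remaining headroom.

```python
# Rough estimate of the memory needed just to hold model weights in bfloat16.
# Assumptions: parameter counts are the nominal figures from the model names;
# real checkpoints differ slightly, and KV cache, activations and runtime
# overhead come on top of this figure.

BYTES_PER_PARAM_BF16 = 2  # bfloat16 stores each value in 16 bits
H100_MEMORY_GB = 80       # memory of the GPU Google cites


def weight_memory_gb(num_params: float, bytes_per_param: int = BYTES_PER_PARAM_BF16) -> float:
    """Return weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


for name, params in [("Gemma 4 26B MoE", 26e9), ("Gemma 4 31B Dense", 31e9)]:
    gb = weight_memory_gb(params)
    headroom = H100_MEMORY_GB - gb
    print(f"{name}: ~{gb:.0f} GB of bf16 weights, ~{headroom:.0f} GB left on an 80 GB GPU")
```

The MoE model still needs all of its weights in memory, even though it uses only a fraction of them per token. That is why both large variants share the same hardware recommendation.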

During inference, the 26B model activates only 3.8 billion of its 26 billion parameters for each token. This sparse activation improves throughput (tokens per second) and reduces latency relative to dense models of similar size. The 31B model, meanwhile, emphasizes output quality and supports fine-tuning for specialized use cases.
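A common rule of thumb helps explain the speed advantage. It holds that a transformer forward pass costs about 2 FLOPs per active parameter per generated token. The sketch below applies that approximation to the reported 3.8B active parameters and compares the result with the 31B dense model. It is an illustrative estimate, not a measured benchmark. It ignores attention cost over long contexts, memory bandwidth and routing overhead.

```python
# Back-of-the-envelope comparison of per-token compute for a sparse
# Mixture-of-Experts model versus a dense model.
# Assumption: a forward pass costs roughly 2 FLOPs per *active* parameter
# per token (a common approximation, not a Gemma-specific figure).


def forward_flops_per_token(active_params: float) -> float:
    """Approximate forward-pass FLOPs needed to generate one token."""
    return 2 * active_params


moe_total = 26e9    # parameters that must be stored in memory
moe_active = 3.8e9  # parameters used for each token (reported figure)
dense_total = 31e9  # a dense model uses every parameter for every token

active_fraction = moe_active / moe_total
compute_ratio = forward_flops_per_token(dense_total) / forward_flops_per_token(moe_active)

print(f"Active share of MoE parameters: {active_fraction:.1%}")
print(f"Dense 31B needs ~{compute_ratio:.1f}x more compute per token than the 26B MoE")
```

Under these assumptions, only about 15% of the MoE weights take part in any given token. The dense model does roughly eight times more arithmetic per token. Real-world speedups are usually smaller, because generation is often limited by memory bandwidth rather than raw compute.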

Google describes the larger models as bringing “frontier intelligence” to personal computers. They are intended for students, researchers, and developers who need advanced reasoning for IDEs, coding assistants, and agentic workflows.

The Effective 2B and Effective 4B models are the most relevant for everyday users. Both can run entirely offline and use relatively little memory during inference, thanks to their small effective sizes of roughly 2 billion and 4 billion parameters.
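Numeric precision matters as much as parameter count when a model has to fit on a phone or a single-board computer. The sketch below estimates weight storage for the two small models at three common precisions. It makes two assumptions. First, it uses the "2B" and "4B" effective sizes as the parameter counts, although the actual checkpoints may store more parameters than the names suggest. Second, the int8 and int4 options are illustrative, not formats Google has confirmed for these models. Only weights are counted.

```python
# Approximate weight footprint of the small Gemma 4 models at common precisions.
# Assumptions: the effective sizes (2B, 4B) are used as parameter counts, the
# precisions are illustrative, and KV cache / runtime overhead are ignored.

BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def footprint_gb(num_params: float, precision: str) -> float:
    """Weight storage in gigabytes (1 GB = 10**9 bytes) at the given precision."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


for name, params in [("Effective 2B", 2e9), ("Effective 4B", 4e9)]:
    sizes = ", ".join(f"{p}: ~{footprint_gb(params, p):.1f} GB" for p in BYTES_PER_PARAM)
    print(f"{name} -> {sizes}")
```

Halving the bits per weight halves the footprint. At 4-bit precision, a 2-billion-parameter model needs only about 1 GB for its weights, which is well within the RAM of current smartphones and Raspberry Pi boards.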

Google notes that keeping the number of active parameters low is what makes deployment on mobile and IoT-class hardware, such as smartphones, Raspberry Pi boards, and Jetson Nano platforms, practical.

Google states that the Gemma 4 models are significantly faster than their predecessors and represent the most capable AI systems built for local hardware. Independent rankings appear to support the capability claim.

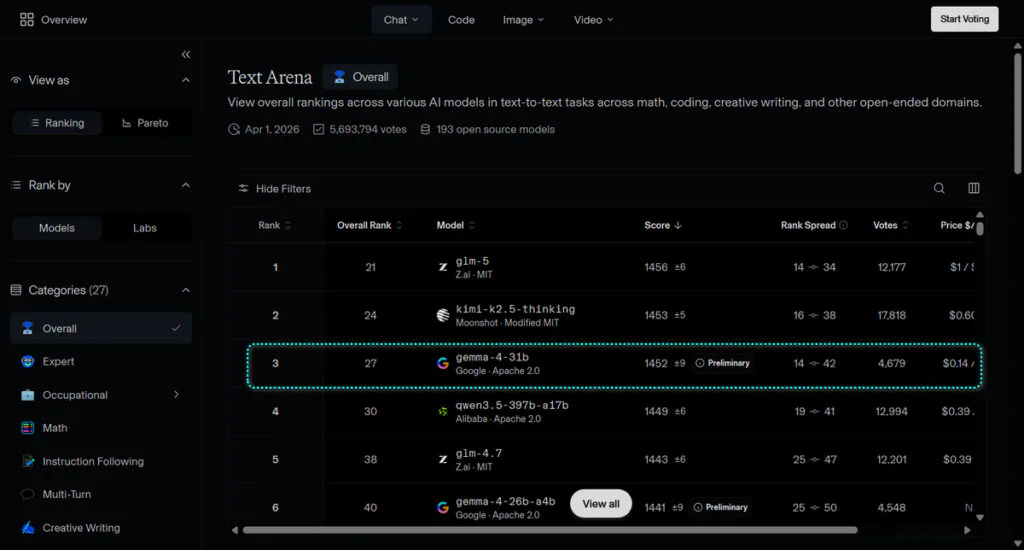

According to the most recent Arena AI open-model leaderboard, the 31B Gemma 4 model ranks third, behind GLM-5 and Kimi K2.5, while the 26B variant ranks sixth.

Unlike its predecessor, Gemma 4 is released under the Apache 2.0 license, which lets developers use and integrate the models without field-of-use restrictions. Gemma 3, by comparison, was distributed under a custom Google license with stricter usage policies and several limitations, which made it less appealing.

Although Gemma 4 uses the permissive Apache 2.0 license, Google describes it as an “open-weight” model rather than a fully open-source one. Under the Open Source Initiative's definition, an AI model counts as open source only if the weights are published together with the complete code used to train and run it and detailed information about its training data.

Google is providing the model parameters without the full reproducible training pipeline, which prevents others from rebuilding the model from scratch. For most developers, this limitation is unlikely to be significant. The Apache 2.0 license still allows commercial use, modification, redistribution, and deployment, with attribution required.
