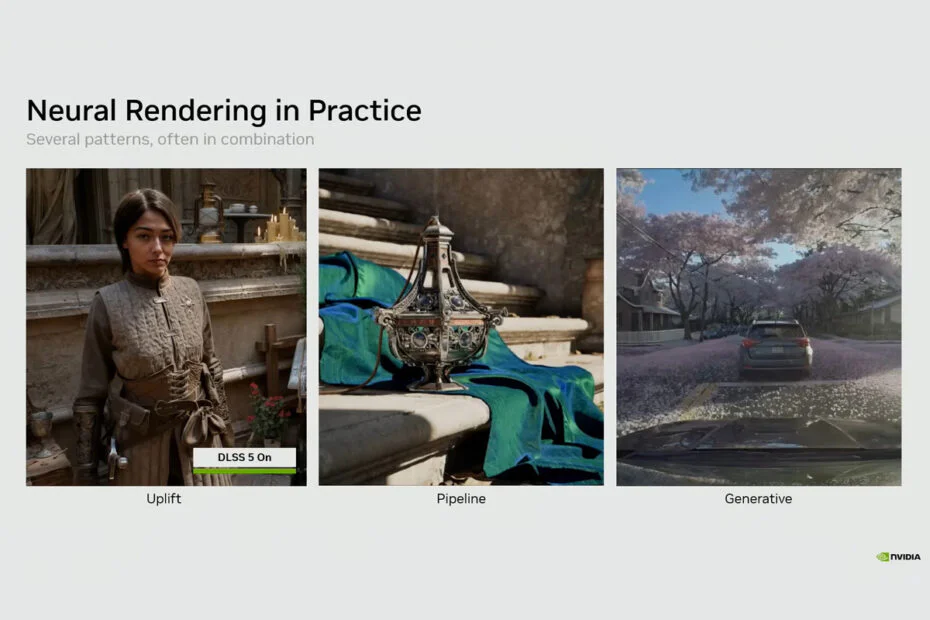

A newly released technical deep dive details how neural textures and materials may alter core game development workflows. Nvidia’s neural rendering is moving beyond stage demos, with follow-up briefings focused on real-engine integration. In a video published days after the DLSS 5 reveal, the company outlines how the system operates internally. The video points to a subtler shift: rather than relying mainly on post-process upscaling, texture and material assets are compressed into compact neural representations to lower memory overhead and improve performance.

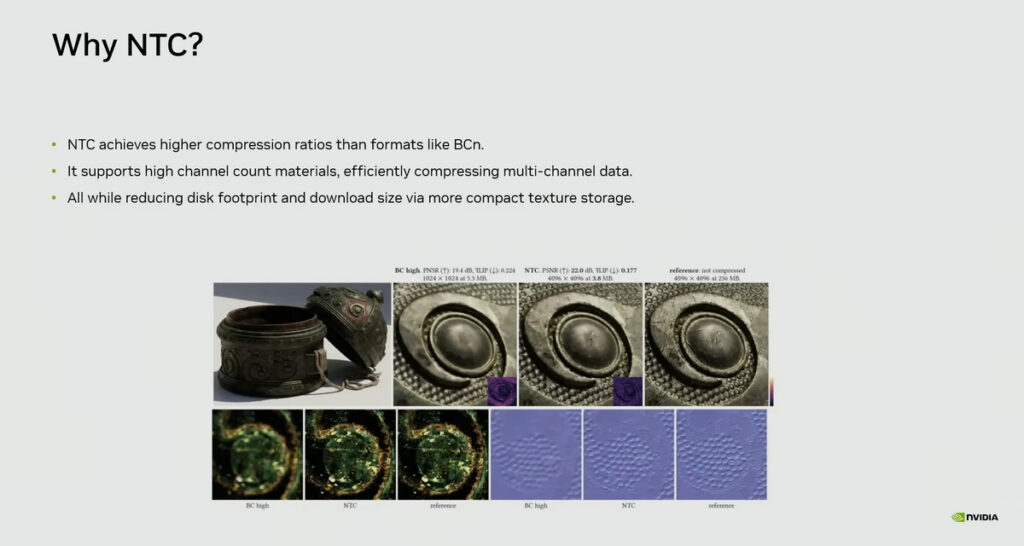

Take Nvidia’s Neural Texture Compression (NTC) as an example. In the “Tuscan Wheels” demo, VRAM usage fell from about 6.5GB with traditional BCn block-compressed textures to roughly 970MB with NTC, while image quality remained close to the source.

At the same 970MB memory footprint, NTC retained more visual detail than conventional block compression. The approach could lead to smaller game installations, lighter update patches, lower download bandwidth requirements, and additional headroom for higher-quality assets on the same GPU.
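To put the Tuscan Wheels figures in proportion, the short Python sketch below works out the implied compression ratio and memory saving. It uses only the two approximate numbers quoted above and assumes decimal units (1 GB = 1000 MB), which the source does not specify.

```python
# Figures quoted for the "Tuscan Wheels" demo; both are approximate.
BCN_VRAM_GB = 6.5      # traditional block-compressed (BCn) textures
NTC_VRAM_MB = 970.0    # Neural Texture Compression

# Assumption: decimal units (1 GB = 1000 MB); the article does not say which.
MB_PER_GB = 1000.0


def vram_savings(before_mb: float, after_mb: float) -> tuple:
    """Return (compression ratio, percent of memory saved)."""
    ratio = before_mb / after_mb
    percent_saved = (1.0 - after_mb / before_mb) * 100.0
    return ratio, percent_saved


ratio, percent_saved = vram_savings(BCN_VRAM_GB * MB_PER_GB, NTC_VRAM_MB)
print(f"about {ratio:.1f}x smaller, {percent_saved:.0f}% less VRAM")
# prints: about 6.7x smaller, 85% less VRAM
```

In other words, the demo's texture set occupies roughly one seventh of its original VRAM footprint.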

For studios struggling with oversized texture data, a reduction of that size offers a practical benefit that another reconstruction pass cannot.

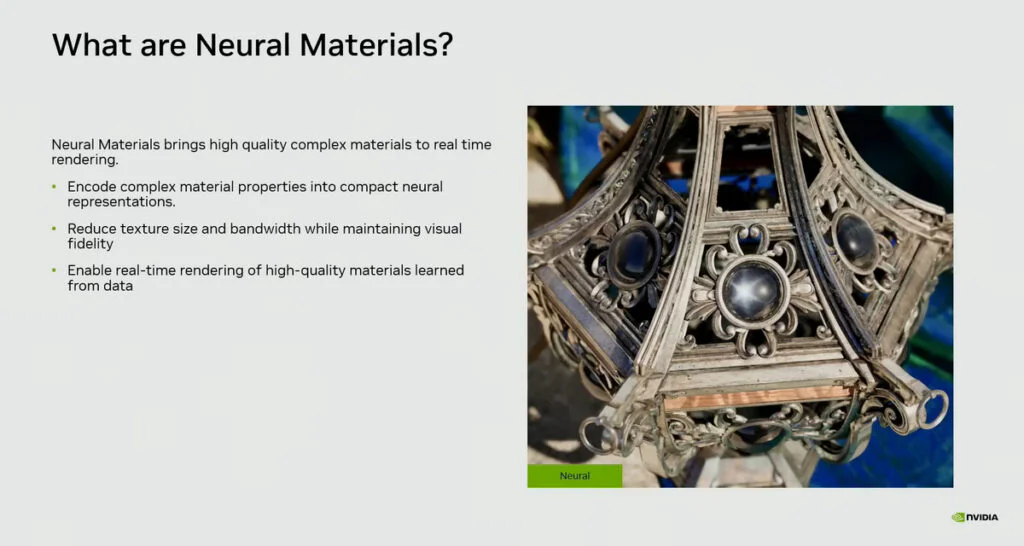

Neural Materials (NM) extends the concept to the shading pipeline. Rather than maintaining multiple texture channels and evaluating computationally heavier BRDF models, Nvidia encodes material response into a compact latent representation that a lightweight neural network reconstructs at render time.
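The general idea of decoding a latent material code with a small network can be illustrated with a toy example. The Python sketch below is not Nvidia's architecture: the latent size, the layer widths, the choice of view and light directions as extra inputs, and the randomly initialized (untrained) weights are all assumptions made purely for illustration.

```python
import math
import random

LATENT_DIM = 8    # hypothetical size of the per-texel latent code
HIDDEN_DIM = 16   # hypothetical hidden-layer width
INPUT_DIM = LATENT_DIM + 6  # latent code + view direction + light direction


def make_layer(rng, n_in, n_out):
    """Random weights and zero biases; a real decoder would be trained."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
               for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense(layer, x):
    """Apply one fully connected layer to the input vector x."""
    weights, biases = layer
    return [sum(w * v for w, v in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def decode_material(latent, view_dir, light_dir, hidden_layer, output_layer):
    """Evaluate a two-layer MLP: ReLU hidden layer, sigmoid RGB output."""
    x = list(latent) + list(view_dir) + list(light_dir)
    hidden = [max(0.0, h) for h in dense(hidden_layer, x)]
    return [1.0 / (1.0 + math.exp(-o)) for o in dense(output_layer, hidden)]


rng = random.Random(0)
hidden_layer = make_layer(rng, INPUT_DIM, HIDDEN_DIM)
output_layer = make_layer(rng, HIDDEN_DIM, 3)

# Stands in for a latent vector fetched from a compressed material texture.
latent = [rng.uniform(-1.0, 1.0) for _ in range(LATENT_DIM)]
rgb = decode_material(latent, (0.0, 0.0, 1.0), (0.0, 0.6, 0.8),
                      hidden_layer, output_layer)
print(rgb)  # three values in (0, 1); meaningless until the weights are trained
```

The point of the sketch is the data flow: a handful of stored numbers per texel plus a few small matrix multiplies stand in for many separate texture fetches and a heavier analytic shading model.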

In one example, a material setup with 19 channels was reduced to eight, with Nvidia reporting 1.4x to 7.7x faster 1080p render times in that scene. The company frames this work less as a way to invent new visuals and more as a method for storing and evaluating existing material data more efficiently, allowing for greater scene complexity within the same hardware budget.
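For a sense of scale, the reported figures can be converted into percentages. The Python snippet below uses only the numbers quoted above; the conversion from a speedup factor to time saved is plain arithmetic, not a figure supplied by Nvidia.

```python
CHANNELS_BEFORE = 19
CHANNELS_AFTER = 8
SPEEDUP_RANGE = (1.4, 7.7)  # reported 1080p speedups for that scene


def time_saved_percent(speedup: float) -> float:
    """A speedup of s means the new render time is 1/s of the old one."""
    return (1.0 - 1.0 / speedup) * 100.0


channel_cut = (1.0 - CHANNELS_AFTER / CHANNELS_BEFORE) * 100.0
low, high = (time_saved_percent(s) for s in SPEEDUP_RANGE)
print(f"{channel_cut:.0f}% fewer channels")
print(f"render time cut by {low:.0f}% to {high:.0f}%")
# prints: 58% fewer channels
# prints: render time cut by 29% to 87%
```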

Together, these approaches align with a broader neural rendering roadmap that moves beyond DLSS 5. DLSS 5 targets the end of the pipeline, applying machine learning to the final frame, while Nvidia’s latest technical briefings focus on embedding lightweight neural networks deeper into engine subsystems.

The goal is to use compact models for specific tasks, including texture decoding, material evaluation, and reducing memory traffic, rather than depending on a single monolithic filter at the end of the frame.

The direction also highlights a growing divide that has emerged since DLSS 5’s debut. Some developers and players remain cautious about AI-driven reconstruction overriding artistic intent, and instead favor using AI for optimization, image quality, and performance without altering a game’s visual identity.
