Google’s Threat Intelligence Group (GTIG) has uncovered what it calls the first known instance of a zero-day exploit developed with the help of artificial intelligence, marking a new frontier in AI-assisted cybercrime.

The exploit, which targeted a vulnerability unknown to the affected company, was being prepared for what Google described as a “mass exploitation event.” The company said its proactive discovery may have prevented the attack from ever taking place. Google didn’t name the intended victim but confirmed it had alerted the organization, which has since patched the security flaw.

Zero-day vulnerabilities are particularly dangerous because they are not yet known to software makers or the public, leaving defenders zero days to prepare a fix before attackers can strike. In this case, Google expressed “high confidence” that an AI model played a role in both finding the vulnerability and crafting the weaponized exploit. The company doesn’t believe its own Gemini models were used.

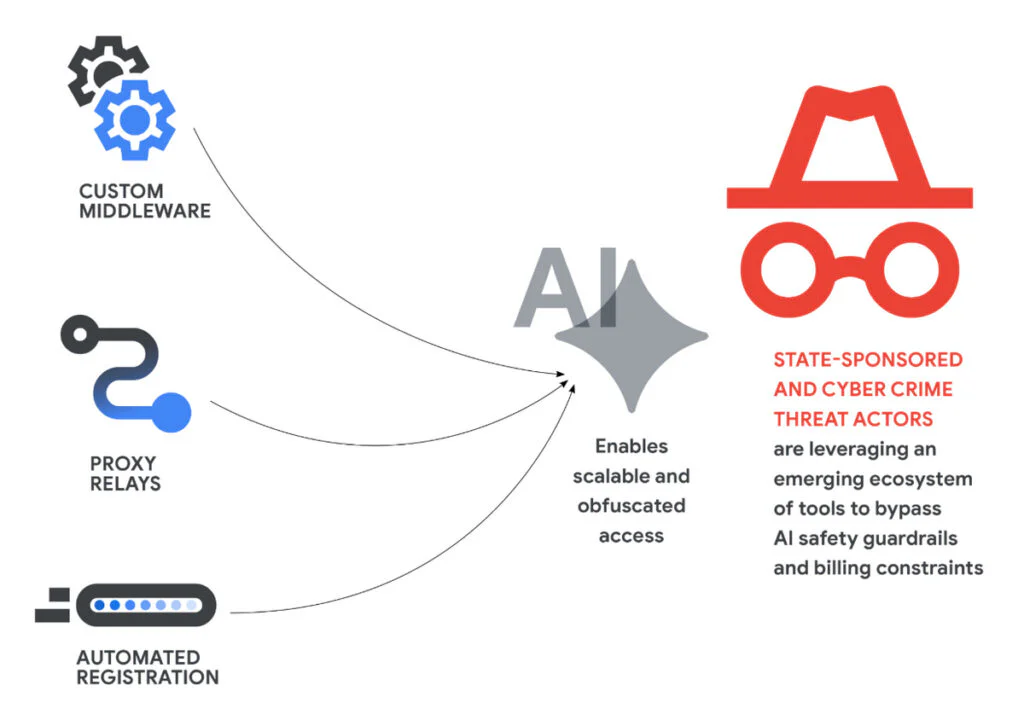

While Google withheld the identity of the threat actor, it noted that groups linked to China and North Korea have shown “significant interest” in employing AI to uncover and exploit security weaknesses. The report stopped short of attributing this specific incident to any particular nation-state group.

In an interview with The New York Times, John Hultquist, chief analyst at GTIG, described the discovery as “a taste of what’s to come” and “the tip of the iceberg,” calling it the first “tangible evidence” of such AI-generated attacks. The remarks underscore a growing unease among security professionals that the same rapid advances fueling everyday AI tools are also lowering the barrier for malicious actors.

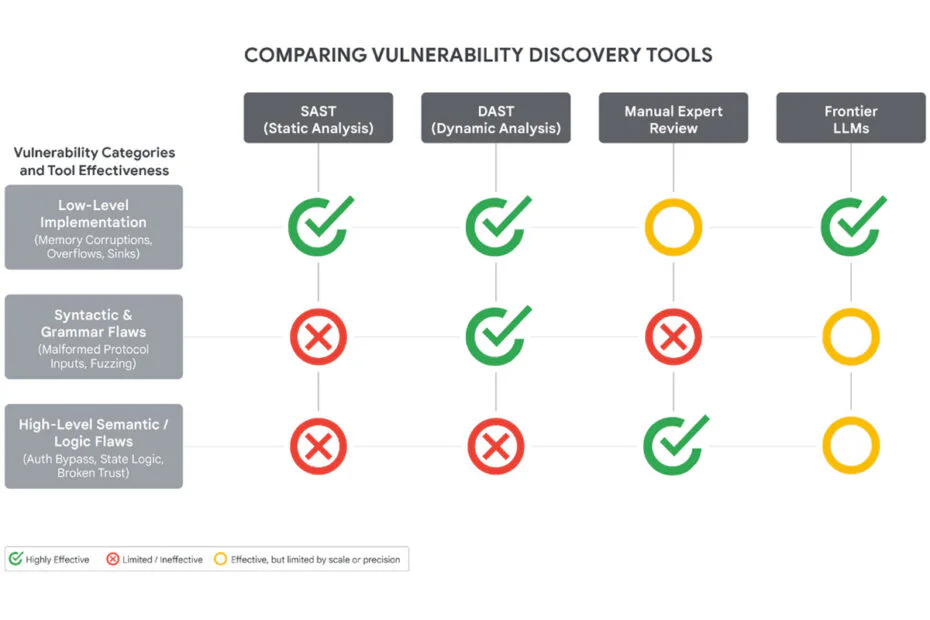

Google’s report acknowledged that threat actors already leverage AI during various stages of cyberattacks, but emphasized that the technology cuts both ways. “AI can also be a powerful tool for defenders,” the company stated. That sentiment is shared elsewhere in the industry. Last month, Anthropic launched Project Glasswing, an initiative that uses its Claude Mythos Preview model to identify and defend against high-severity vulnerabilities before they can be exploited.

The GTIG findings suggest that AI is beginning to accelerate cyber threats, and they highlight the parallel race between attackers and defenders to operationalize the technology first.
