AMD has revealed OpenClaw, a platform aimed at enabling developers to deploy sophisticated AI agents locally on consumer-grade systems, reducing dependence on cloud infrastructure.

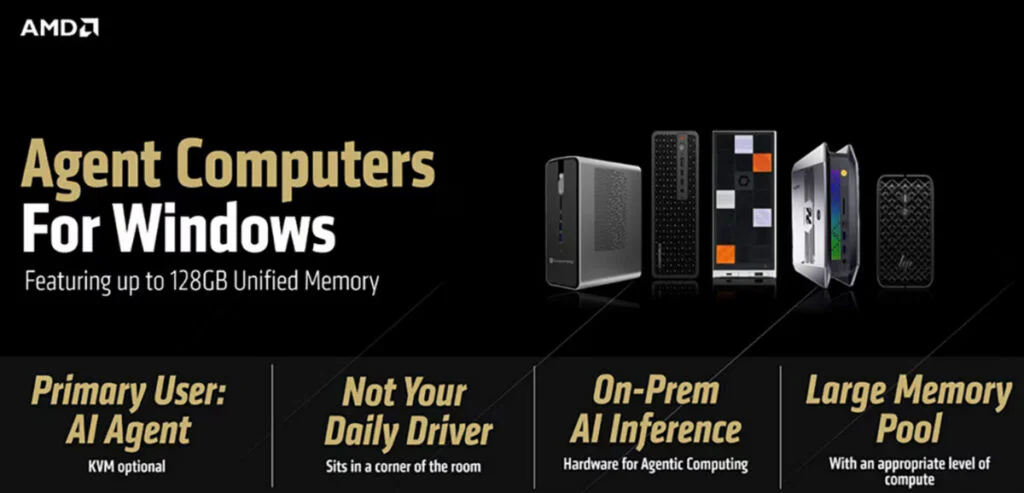

The project is part of AMD’s broader “Agent Computer” strategy, which suggests that the future of AI will not rely solely on remote data centers. In this vision, AI assistants run in user-controlled environments, operating continuously while reducing network dependence, subscription costs, and privacy concerns.

With OpenClaw, AMD is attempting to translate its concept into a developer-ready platform. The system operates on Windows through WSL2, while LM Studio, powered by the llama.cpp backend, handles local inference. This architecture enables large models like Qwen 3.5 35B A3B to run directly on consumer hardware.
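For readers who want to see what talking to such a local inference server might look like, here is a minimal sketch. It assumes LM Studio's local server is running on its default port (1234) with the OpenAI-compatible chat completions route enabled. The model identifier `qwen3.5-35b-a3b` and the helper names `build_chat_request` and `ask` are hypothetical and are not taken from AMD's guide.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is listening on its default port (1234)
# and serving the OpenAI-compatible API under /v1.
BASE_URL = "http://localhost:1234/v1"

# Hypothetical identifier; use whatever name LM Studio shows for the loaded model.
MODEL_ID = "qwen3.5-35b-a3b"


def build_chat_request(prompt: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the local server."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt to the local model and return the text of its reply."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=120) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With the server running and a model loaded, calling `ask("Summarize today's notes in two sentences.")` would return the model's reply as a string. Nothing in this exchange leaves the machine, which is the point of the local setup.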

To maintain continuity without cloud synchronization, OpenClaw uses Memory.md, an embedding-based memory framework that stores contextual data locally on the device. AMD presents the release as a step-by-step guide for developers who want to configure a complete OpenClaw environment on Windows for testing AI agent architectures.

AMD has outlined two separate hardware configurations for OpenClaw, each designed to address different performance needs.

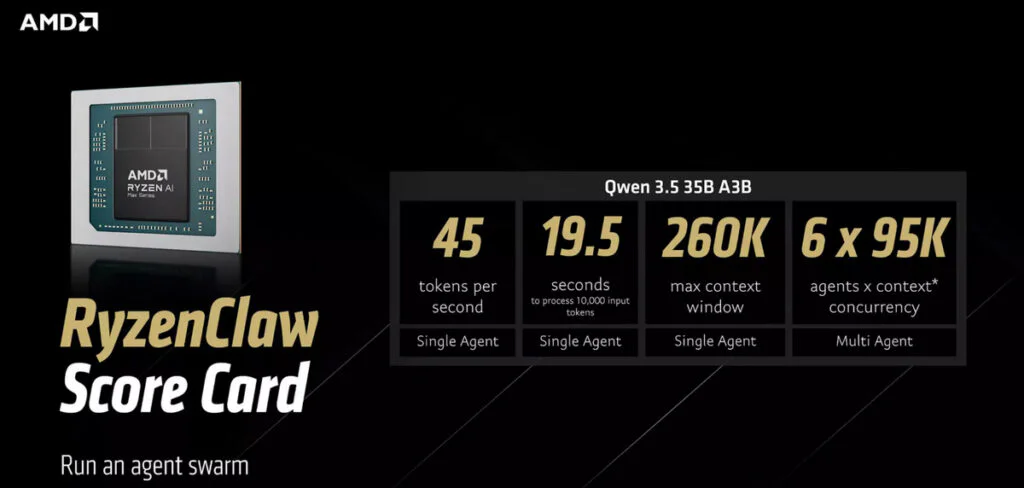

The first configuration, called RyzenClaw, is based on AMD’s Ryzen AI Max+ processor and 128GB of unified memory. AMD suggests allocating about 96GB of that memory as Variable Graphics Memory to support efficient LLM inference. Under these conditions, the Qwen 3.5 35B A3B model can generate roughly 45 tokens per second and process a 10,000-token prompt in around 19.5 seconds. With a context window reaching 260,000 tokens, AMD says the system is capable of running up to six local AI agents simultaneously.

The second configuration, called RadeonClaw, moves most of the computational work to a discrete GPU, the Radeon AI PRO R9700. Equipped with 32GB of dedicated VRAM, the workstation card delivers a substantial jump in performance. Running the same model, generation throughput climbs to about 120 tokens per second, and the time required to process a 10,000-token prompt falls to roughly 4.4 seconds.

That performance boost comes with compromises. The maximum context window drops to 190,000 tokens, and the system can run only two agents at the same time. The trade-off reflects AMD’s broader strategy of offering different tuning options, letting developers prioritize either deeper context and more concurrent agents or faster inference, depending on their workload.
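To put the two sets of figures side by side, the sketch below converts the quoted 10,000-token prompt times into prompt-processing rates and estimates the latency of a single agent turn. It assumes prompt processing scales linearly with prompt length and ignores any overhead from running several agents at once, so the results are rough estimates rather than measurements. The `Config` class and its field names are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Config:
    name: str
    gen_tok_per_s: float   # generation speed quoted by AMD
    prompt_10k_s: float    # seconds to process a 10,000-token prompt
    max_context: int       # maximum context window, in tokens
    max_agents: int        # simultaneous agents AMD says it supports

    @property
    def prompt_tok_per_s(self) -> float:
        """Prompt-processing rate implied by the 10,000-token figure."""
        return 10_000 / self.prompt_10k_s

    def turn_seconds(self, prompt_tokens: int, output_tokens: int) -> float:
        """Estimated time for one turn: read the prompt, then generate output.

        Assumption: both phases scale linearly with token count.
        """
        return (prompt_tokens / self.prompt_tok_per_s
                + output_tokens / self.gen_tok_per_s)


RYZENCLAW = Config("RyzenClaw", 45.0, 19.5, 260_000, 6)
RADEONCLAW = Config("RadeonClaw", 120.0, 4.4, 190_000, 2)
```

Under these assumptions, RyzenClaw processes prompts at about 513 tokens per second and RadeonClaw at about 2,273. A turn with a 10,000-token prompt and a 500-token reply would take roughly 30.6 seconds on RyzenClaw and 8.6 seconds on RadeonClaw, while RyzenClaw keeps the larger context window and three times as many concurrent agents.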

AMD notes that neither configuration is designed for everyday users. A desktop built around the Ryzen AI Max+ 395 processor with 128GB of memory, such as a Framework Desktop configuration, starts at around $2,700. The RadeonClaw option increases the cost further, as the Radeon AI PRO R9700 GPU alone is priced at roughly $1,299.

For now, the company concedes that OpenClaw targets early adopters and engineers experimenting with local AI agents rather than mainstream consumers.
