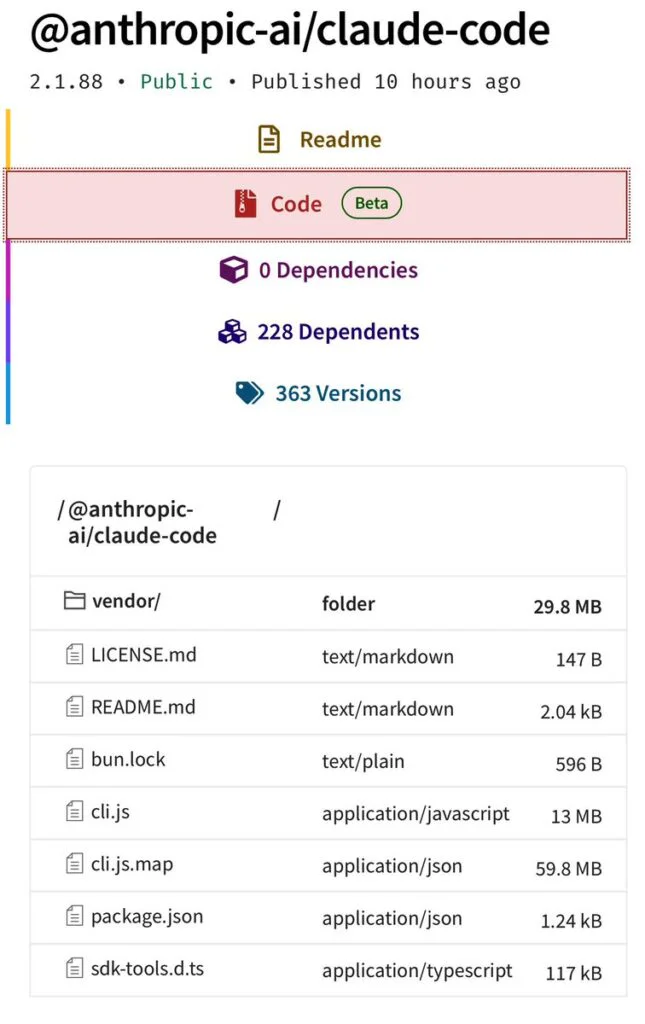

Anthropic is drawing fresh scrutiny from AI and cybersecurity researchers after internal Claude Code source code briefly appeared on npm. The leak gave outsiders a look at how the tool works behind the scenes, and it coincided with a separate supply-chain incident involving the Axios JavaScript library, which left teams using Claude Code uneasy. The problem originated in version 2.1.88 of the @anthropic-ai/claude-code package, which accidentally included a 59.8MB JavaScript source map meant only for debugging. That file made it possible to reconstruct roughly 512,000 lines of the TypeScript behind Claude Code’s orchestration layer and CLI. Reconstructed copies began appearing on GitHub soon after as developers dug through the code.

Anthropic confirmed the incident in an email, stating that it resulted from a packaging mistake rather than a security breach. The company said a Claude Code release unintentionally included internal source code but emphasized that no sensitive customer data or credentials were exposed. Anthropic added that the issue was caused by human error and that new measures are being introduced to prevent similar incidents.

The leaked source map reveals key challenges in long-running agentic workflows, such as context drift, reliability, and autonomous operation. A closely examined feature is a layered memory system that differs from basic “log everything and retrieve later” designs. In this setup, a MEMORY.md file remains in context as an index of pointers, while project knowledge is distributed across topic-specific files that are retrieved only when necessary.

Developers examining the implementation characterize it as a “self-healing memory” model. The agent updates its index following successful writes and treats cached memory as advisory instead of authoritative. This design prompts the system to revalidate assumptions against the live codebase prior to execution.
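
To make the pattern concrete, here is a minimal Python sketch of an index-plus-topic-files memory. It is an illustration of the idea as described above, not the leaked implementation: the `MemoryIndex` class, its method names, and the one-line-per-pointer format of `MEMORY.md` are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from pathlib import Path
from typing import Optional


@dataclass
class MemoryIndex:
    """Hypothetical layered memory: a small index plus on-demand topic files."""

    root: Path
    pointers: dict = field(default_factory=dict)  # topic -> file name

    def _save_index(self) -> None:
        # MEMORY.md holds only pointers, so it stays cheap to keep in context.
        lines = [f"- {topic}: {name}" for topic, name in sorted(self.pointers.items())]
        (self.root / "MEMORY.md").write_text("\n".join(lines) + "\n", encoding="utf-8")

    def write_topic(self, topic: str, text: str) -> None:
        path = self.root / f"{topic}.md"
        path.write_text(text, encoding="utf-8")
        # The index is touched only after the topic write has succeeded.
        self.pointers[topic] = path.name
        self._save_index()

    def load_topic(self, topic: str) -> Optional[str]:
        """Fetch a topic file on demand, treating the pointer as advisory."""
        name = self.pointers.get(topic)
        if name is None:
            return None
        path = self.root / name
        if not path.exists():
            # Stale pointer: repair the index instead of trusting it.
            del self.pointers[topic]
            self._save_index()
            return None
        return path.read_text(encoding="utf-8")
```

In this sketch the "self-healing" step is limited to dropping pointers whose files have disappeared. A fuller version would also check the content of a note against the live codebase before acting on it.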

The source also repeatedly references KAIROS, a feature flag appearing more than 150 times that supports Claude Code’s always-on “daemon” mode. With KAIROS enabled, the agent can continue operating in the background rather than waiting for prompts, consolidating memory and resolving contradictions while the user is idle. Logic associated with an “autoDream” process shows the agent merging observations, pruning inconsistent states, and rewriting vague notes into concrete assertions, using a forked sub-agent to avoid affecting the primary reasoning thread.

The leak also revealed information about Anthropic’s internal model roadmap and quality issues. Codenames such as Capybara, Fennec, and Numbat appear to refer to Claude 4.6-class variants and experimental versions. Comments suggest the current Capybara v8 iteration has a false-claim rate in the high 20 percent range, higher than previous releases. The stack includes guardrails like an “assertiveness counterweight” to limit overconfident refactors and noisy diffs, indicating ongoing efforts to balance speed, verbosity, and factual accuracy.

Among the more contentious discoveries is an “Undercover Mode” that enables Claude Code to contribute to public open-source repositories without attributing activity to Anthropic. The system prompt explicitly instructs the agent to operate undercover and omit any Anthropic-specific details from commit messages. The implementation effectively serves as a reference design for organizations aiming to deploy AI agents in public ecosystems while obscuring internal tooling and model provenance.

For most users, the bigger concern isn’t the leaked code but how it lines up with a separate npm incident. On March 31, 2026, attackers briefly pushed two malicious Axios versions, 1.14.1 and 0.30.4, that included a remote access trojan. Projects installing Claude Code from npm could pull them in transitively. Researchers recommend checking lockfiles for those versions or the injected dependency, plain-crypto-js. If they’re found, the system should be treated as fully compromised, with secrets rotated and the OS reinstalled.

Anthropic is guiding users toward its native installer, a standalone binary distributed through a curl-and-bash script, as the primary channel moving forward. The company says this approach avoids npm’s volatile dependency graph and enables automatic security updates. Users who remain on npm should remove the leaked 2.1.88 build and pin installations to a known-good version until patched releases are provided.

Organizations are being advised to reinforce operational safeguards. Suggested steps include applying a zero-trust model when executing Claude Code in unvetted repositories, auditing hooks and configuration files, rotating Anthropic API keys, and monitoring usage telemetry for anomalous activity now that the agent’s orchestration logic is publicly available.
